The ESG story cloud AI can't tell.

Tokens, kilowatts, and carbon sit on the same chain, but cloud AI breaks it. A practical framework for closing the Scope 2 loop on AI workloads.

Sustainability teams are being asked to account for AI workloads, and most of them are discovering the same thing at the same time: the numbers they need aren't available. Not because the maths is hard, but because the vendors they buy from don't publish the underlying figures, and the teams' own auditors won't accept vendor averages as primary evidence.

That isn't a niche problem. Every UK company caught by SECR, every EU company caught by CSRD, every organisation doing market-based Scope 2 accounting, has a piece of paper that says "use primary data where feasible." For AI workloads running on someone else's infrastructure, feasibility has been redefined down to "whatever the vendor chooses to tell you." This article is about closing that gap before the auditor notices.

The chain

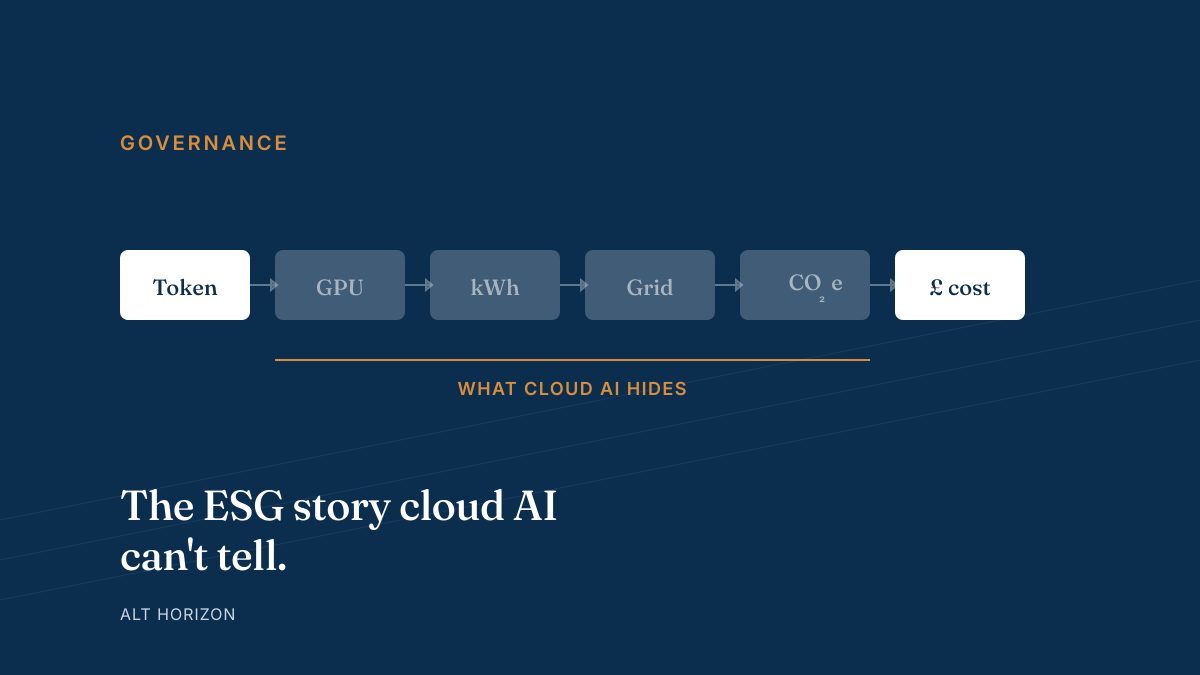

Every AI workflow produces the same trail of evidence, whether you can see it or not:

- Tokens — the prompt and response, measured in model-specific units.

- Compute — GPU seconds consumed to produce those tokens.

- Kilowatt-hours — the electrical energy drawn by that compute (and the cooling and CPU and RAM sitting alongside it).

- Grid-emission factor — how dirty a kilowatt-hour is in the jurisdiction and at the hour it was drawn.

- CO₂e — the product of the previous two (kilowatt-hours × grid-emission factor), in kilograms or tonnes.

- Cost — the bill you see at the end of the month.

Steps one and six are visible to everyone. What happens between them determines whether your sustainability report stands up when somebody reads it carefully.
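
A minimal sketch of the chain as one record per request may make the gap easier to see. The `ChainRecord` class, its field names, and the cost value are all illustrative assumptions, not part of any product or standard; fields left as `None` stand for links you cannot observe.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class ChainRecord:
    """One request's evidence trail; None means 'not observable'."""
    tokens: int                                       # step 1: visible to everyone
    gpu_seconds: Optional[float] = None               # step 2
    kwh: Optional[float] = None                       # step 3
    grid_factor_kg_per_kwh: Optional[float] = None    # step 4
    co2e_kg: Optional[float] = None                   # step 5
    cost: Optional[float] = None                      # step 6: the bill

    def missing_links(self) -> list[str]:
        """Names of the steps that have no recorded value."""
        return [f.name for f in fields(self) if getattr(self, f.name) is None]


# A cloud API call typically yields only the bookends.
cloud_call = ChainRecord(tokens=800, cost=0.01)  # cost value is made up
print(cloud_call.missing_links())
```

A self-hosted request would fill in every field, so `missing_links()` would return an empty list.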

Where cloud AI breaks the chain

Send a prompt to GPT, Claude, or Gemini and you are handed the bookends: token count at the input, pounds at the output. The four middle steps are a vendor's private metric. You can estimate them. You cannot measure them.

Google is the one exception worth naming: the company publishes per-prompt figures for Gemini today, approximately 0.24 Wh, 0.03 g CO₂e, and 0.26 mL of water for a median 550-token text prompt, updated periodically. OpenAI and Anthropic publish nothing comparable. You can multiply Google's number by your volume on another provider and produce a plausible-looking row in a sustainability report, but the data is for a different model on a different provider's infrastructure, and you know it. So does a good auditor.

The gap is sharper than it looks. Cloud vendors decide what data to publish and when; they change it without warning; and the published figures are rolled-up averages, not measurements of your workload. If your AI spend is enough to show up in a CFO review, it is enough to show up in a sustainability review too, and the evidence standards are different.

Vendor averages are not primary data. The GHG Protocol is explicit: primary data from the reporting entity's own operations takes precedence over supplier averages. For AI workloads running on an API you don't control, you don't have primary data. You have a bill.

What the frameworks actually ask for

Three frameworks capture most of the surface area for AI reporting:

SECR (Streamlined Energy & Carbon Reporting)

UK large-company and quoted-company disclosure regime in force since 2019. Requires annual reporting of energy use, associated Scope 1 and 2 emissions, and (for quoted companies) Scope 3 where material. The statutory instrument doesn't care how much of your compute runs on AI; it cares that the electricity consumption is accounted for and the emission factors are sourced. If AI workloads are material to your electricity use, they need to be in the numbers.

CSRD (Corporate Sustainability Reporting Directive)

EU directive applying to large companies and (from 2026) many non-EU companies with significant EU operations. CSRD requires reporting against the ESRS standards, including ESRS E1 on climate change. ESRS E1 mandates gross Scope 1, 2, and 3 emissions with data-quality disclosures — specifically, you must state whether figures are primary-measured, supplier-specific, or average-based, and you must explain the data quality. "Cloud vendor average" is a valid disclosure. It is also a weak one.
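
As a rough illustration of that disclosure, the sketch below tags each figure with one of the three data-quality labels named above and reports the weakest label alongside any total. The class names, the ordering of the labels, and the "weakest wins" rule are assumptions made for this example, not wording taken from ESRS E1.

```python
from dataclasses import dataclass
from enum import IntEnum


class DataQuality(IntEnum):
    """Ordered so that a higher value means weaker evidence."""
    PRIMARY_MEASURED = 1
    SUPPLIER_SPECIFIC = 2
    AVERAGE_BASED = 3


@dataclass(frozen=True)
class EmissionFigure:
    label: str
    co2e_kg: float
    quality: DataQuality
    source: str  # free-text provenance, e.g. a meter ID or a vendor report


def summarise(figures: list[EmissionFigure]) -> tuple[float, DataQuality]:
    """Return total CO2e plus the weakest data quality among the inputs."""
    if not figures:
        raise ValueError("nothing to summarise")
    total = sum(f.co2e_kg for f in figures)
    weakest = max(f.quality for f in figures)
    return total, weakest


# Illustrative rows only; the values are placeholders.
rows = [
    EmissionFigure("self-hosted inference", 0.146, DataQuality.PRIMARY_MEASURED, "rack PDU export"),
    EmissionFigure("cloud API", 0.044, DataQuality.AVERAGE_BASED, "vendor per-prompt average"),
]
total, weakest = summarise(rows)
print(f"{total:.3f} kg CO2e, weakest evidence: {weakest.name}")
```

Carrying the label next to the number means a mixed total cannot quietly inherit the credibility of its best input.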

GHG Protocol Scope 2 guidance

The methodological backbone underneath both. Scope 2 emissions are those from purchased electricity consumed by the reporting entity. There are two accounting methods:

- Location-based — electricity consumption × the average grid-emission factor for the location and period. UK DESNZ publishes this factor; the worked example below uses 0.192 kg/kWh.

- Market-based — electricity consumption × the contractual emission factor (green tariff, PPA, or residual-mix where neither applies). This is the one where your renewable-energy contract actually earns you a number.

Both methods assume you know your electricity consumption for the activity being reported. Cloud AI breaks that assumption. You don't know the kWh because you don't own the meter.
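
The two methods differ only in which factor multiplies the kilowatt-hours. A minimal sketch, with hypothetical function names and a made-up residual-mix factor (only the 0.76 kWh and 0.192 kg/kWh inputs come from this article's worked example):

```python
from typing import Optional


def location_based_kg(kwh: float, grid_factor: float) -> float:
    """Location-based Scope 2: consumption x average grid factor (kg CO2e/kWh)."""
    return kwh * grid_factor


def market_based_kg(
    kwh: float,
    residual_mix_factor: float,
    contractual_factor: Optional[float] = None,
) -> float:
    """Market-based Scope 2: use the contractual factor when one exists,
    otherwise fall back to the residual-mix factor."""
    # Compare against None so a genuine 0.0 contractual factor is respected.
    factor = contractual_factor if contractual_factor is not None else residual_mix_factor
    return kwh * factor


kwh = 0.76                 # monthly consumption from the worked example
RESIDUAL_MIX = 0.40        # made-up value, for illustration only

print(location_based_kg(kwh, 0.192))
print(market_based_kg(kwh, RESIDUAL_MIX, contractual_factor=0.0))
print(market_based_kg(kwh, RESIDUAL_MIX))
```

Both functions take `kwh` as an input they cannot derive, which is the point: without a metered consumption figure, neither method has anything to multiply.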

What self-hosting closes

When the inference runs on hardware you own, every link in the chain becomes a number you can measure:

- GPU time — your runtime (vLLM, Ollama, a managed platform) logs per-request compute time. Horizon Portal stores it alongside the token count and the workload ID, so you can aggregate by team, customer, or use case later.

- Kilowatt-hours — a smart PDU or a rack-level meter gives you the actual draw at the actual hour. This is primary data by any reasonable reading of the GHG Protocol.

- Grid factor — your own contractual figure if you have a green tariff or PPA; the DESNZ location-based figure if you don't. Either way, you picked it and you can defend the choice.

- CO₂e — derivable from the kilowatt-hours and the grid factor, with full provenance logged alongside the figure.

None of this is novel infrastructure engineering. It is novel sustainability-reporting engineering. The measurements exist. The work is to expose them, timestamp them, and keep them in a form an auditor can trace.
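
One possible way to turn a meter reading into per-workload figures is to split each metering interval's kWh across requests in proportion to their logged GPU-seconds. This is a sketch under that assumption; the `RequestLog` shape and the pro-rata rule are illustrative choices, not a description of how any particular runtime or platform stores its logs.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestLog:
    workload_id: str
    tokens: int
    gpu_seconds: float


def allocate_kwh(metered_kwh: float, logs: list[RequestLog]) -> dict[str, float]:
    """Split one metering interval's kWh across workloads by GPU-seconds.

    Idle and overhead energy is spread pro rata, so the allocations
    always sum to the metered total.
    """
    total_gpu = sum(r.gpu_seconds for r in logs)
    if total_gpu <= 0:
        raise ValueError("no GPU time logged for this interval")
    shares: dict[str, float] = defaultdict(float)
    for r in logs:
        shares[r.workload_id] += metered_kwh * r.gpu_seconds / total_gpu
    return dict(shares)


# Made-up logs for a single hour in which the meter recorded 0.3 kWh.
logs = [
    RequestLog("support", 800, 9.0),
    RequestLog("support", 820, 9.5),
    RequestLog("sales", 400, 4.5),
]
allocation = allocate_kwh(0.3, logs)
print(allocation)
```

Because the split is anchored to the meter rather than to a per-token estimate, the per-workload figures reconcile with the electricity actually drawn.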

A worked example

A support team processes 1,000 AI-summarised tickets per month, averaging 800 tokens per request. Consider two deployments:

Self-hosted on a Qwen3 8B-class model (RTX 5070 Ti, ~88 tokens/sec at 16K context):

- Compute time: ~9,100 GPU-seconds per month.

- Electricity: ~0.76 kWh per month (GPU + system overhead, implying an average draw of roughly 300 W over those GPU-seconds).

- CO₂e at UK DESNZ 0.192 kg/kWh: ~146 g / month.

- Every figure above is from a meter, a log, or a published factor. Your auditor can trace each one.

Cloud-equivalent (pro-rated from Google's published per-prompt benchmark):

- Estimated energy: ~0.35 kWh per month (0.24 Wh × 1,000 × 800/550 token scaling).

- Estimated CO₂e using vendor's published factor: ~44 g / month (0.03 g × 1,000 × 800/550 token scaling).

- Every figure above is an estimate from a supplier average of a different provider's median prompt. An auditor flags data-quality as tier 3 or 4 at best.

Read that carefully. At this workload size, the cloud figure is actually lower than self-hosted: roughly 44 g against 146 g. That matters. It means self-hosting rarely wins on carbon-volume grounds at small scale: both numbers are tiny, and the cloud provider's data-centre efficiency and green-power purchasing are real. The self-hosted win here is not the absolute number. It is the data quality underneath it.
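
The arithmetic behind both deployments can be reproduced in a few lines. The only input not stated in the example is the 0.30 kW average draw, which is an assumption back-solved from the ~0.76 kWh figure; the results match the quoted numbers to within rounding.

```python
TICKETS_PER_MONTH = 1_000
TOKENS_PER_TICKET = 800

# Self-hosted: throughput and grid factor as quoted in the example.
TOKENS_PER_SECOND = 88
AVG_POWER_KW = 0.30      # assumption: back-solved from ~0.76 kWh
GRID_FACTOR = 0.192      # kg CO2e per kWh

gpu_seconds = TICKETS_PER_MONTH * TOKENS_PER_TICKET / TOKENS_PER_SECOND
self_kwh = gpu_seconds / 3600 * AVG_POWER_KW
self_g = self_kwh * GRID_FACTOR * 1000  # kg -> g

# Cloud estimate: vendor per-prompt figures scaled linearly by token count.
VENDOR_WH_PER_PROMPT = 0.24
VENDOR_G_PER_PROMPT = 0.03
VENDOR_PROMPT_TOKENS = 550

scale = TICKETS_PER_MONTH * TOKENS_PER_TICKET / VENDOR_PROMPT_TOKENS
cloud_kwh = VENDOR_WH_PER_PROMPT * scale / 1000  # Wh -> kWh
cloud_g = VENDOR_G_PER_PROMPT * scale

print(f"self-hosted: {gpu_seconds:,.0f} GPU-s, {self_kwh:.2f} kWh, {self_g:.1f} g CO2e")
print(f"cloud est.:  {cloud_kwh:.2f} kWh, {cloud_g:.1f} g CO2e")
```

Note that the linear token scaling on the cloud side is itself an assumption; the vendor figure describes a median prompt, not a per-token rate.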

The decision lens

Three honest cases:

- Self-hosting wins on ESG volume — rare. Typically only at very high throughput where your self-hosted stack can run 24/7 on a green tariff with no idle overhead, or when cloud vendors run on dirtier grids than yours.

- Self-hosting wins on defensibility — common. Any workload that is material enough to appear in your sustainability report, where "cloud vendor average" is an uncomfortable disclosure. This is the main reason regulated buyers are moving.

- Self-hosting is neutral or loses — also common. Low-volume workloads, novel capabilities only frontier cloud models can deliver, or teams that don't yet have the operational maturity to run a GPU fleet. A hybrid pattern usually wins.

Most organisations land on hybrid. Route the bulk of volume (the routine, the sensitive, the predictable) to self-hosted where the chain is closed and the data is yours. Route the frontier slice (the once-a-week reasoning problem, the occasional long-context summarisation) to a cloud provider when capability justifies the cost. Our cost calculator has a deployment toggle that shows this split side-by-side for your own workload.
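
A routing rule for that split could be as simple as the sketch below. Everything here is hypothetical: the `Request` fields, the 16,000-token limit, and the order of the checks stand in for whatever policy your own workload needs.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    SELF_HOSTED = "self_hosted"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Request:
    prompt_tokens: int
    sensitive: bool = False
    needs_frontier: bool = False  # set by the caller, e.g. per use case


SELF_HOSTED_CONTEXT_LIMIT = 16_000  # hypothetical limit for the local model


def route(req: Request) -> Target:
    """Pick a deployment for one request under a simple, assumed policy."""
    # Sensitive data stays on owned hardware regardless of capability.
    if req.sensitive:
        return Target.SELF_HOSTED
    # The frontier slice: hard reasoning or context beyond the local limit.
    if req.needs_frontier or req.prompt_tokens > SELF_HOSTED_CONTEXT_LIMIT:
        return Target.CLOUD
    # Everything routine and predictable stays where the chain is closed.
    return Target.SELF_HOSTED


print(route(Request(prompt_tokens=800)))
print(route(Request(prompt_tokens=50_000)))
print(route(Request(prompt_tokens=50_000, sensitive=True)))
```

Logging the chosen target with each request keeps the measured and estimated portions of the footprint separable at reporting time.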

What this is, and what it isn't

It is tempting to dress this thesis up as a carbon-neutrality story. It isn't. Three limits:

- Making your AI workload measurable does not make it low-carbon. The kWh still has to come from somewhere. A green tariff or on-site renewables is still the lever that moves the number.

- Self-hosting is not a certification. SECR and CSRD ask for disclosures that can stand up to scrutiny, not a stamp; CSRD, for instance, starts with limited assurance over the sustainability statement. What self-hosting delivers is primary data: audit-grade evidence that an assurance opinion can actually rest on.

- The honest frame is defensibility, not virtue. If your board asks whether the AI line in the sustainability report can survive a hostile auditor, self-hosting closes the uncomfortable distance between estimate and measurement.

If your AI strategy has to survive both a CFO and a sustainability officer, "the vendor publishes an average" is not an answer.

The short version

Sustainability reporting rewards primary data. Cloud AI doesn't produce primary data for the four steps that matter. Self-hosted AI does, and for AI workloads that are material to the disclosure, that makes it the most defensible position.

Everything else — absolute kilograms of CO₂e, whether hybrid is cheaper, whether 8B is enough for your use case — is a workload-specific question. Those are the ones we scope in a Cost & ROI Model engagement, or surface live inside Horizon Portal's ESG view.
